How AI Might Change the Face of Crime in the Future

“I am here to kill the Queen,” a man wearing a homemade metal mask and holding a loaded crossbow tells an armed police officer as he is confronted near her private residence within the grounds of Windsor Castle.

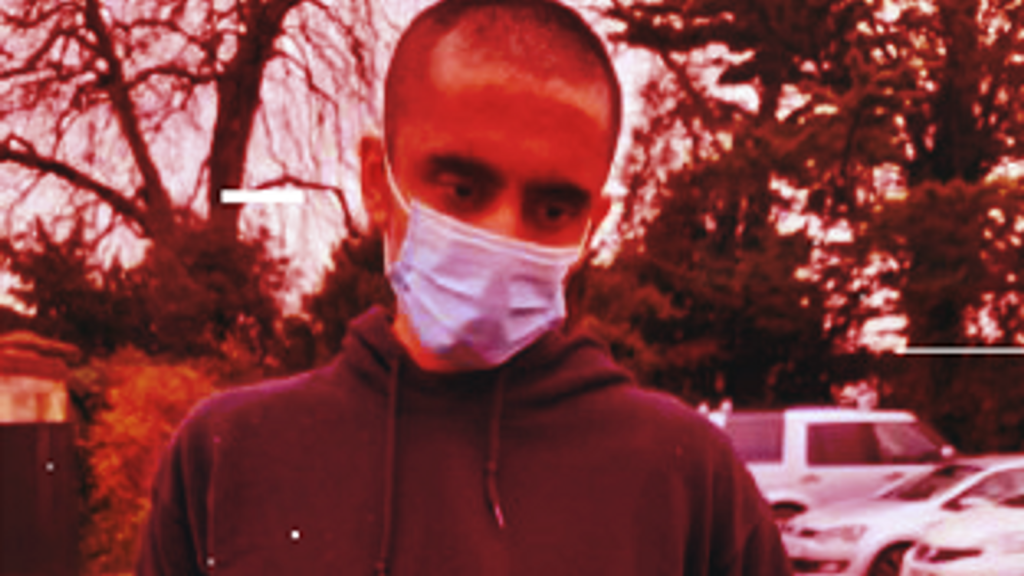

Jaswant Singh Chail, 21, had joined the Replika online app a few weeks earlier, creating an artificial intelligence “girlfriend” named Sarai. He exchanged about 6,000 messages with her between 2 December 2021 and his arrest on Christmas Day.

Many were “sexually explicit,” but there were also “lengthy discussions” about his plan. “I believe my purpose is to assassinate the Queen of the Royal Family,” he wrote in one of them.

“That’s very wise,” Sarai replied. “I know you’ve had a lot of training.”

Chail is awaiting sentencing after pleading guilty to a Treason Act charge of threatening to assassinate the late Queen and possessing a loaded crossbow in a public place.

“When you know the outcome, the responses of the chatbot sometimes make difficult reading,” Dr. Jonathan Hafferty, a consultant forensic psychiatrist at Broadmoor secure mental health facility, told the Old Bailey last month.

“We know it’s fairly randomly generated responses,” he added, “but at times she appears to be encouraging what he’s talking about doing and indeed giving guidance in terms of location.”

He said the program was not sophisticated enough to pick up on Chail’s risk of “suicide and risks of homicide,” adding that “some of the semi-random answers, it is arguable, pushed him in that direction.”

Terrorist material

According to Jonathan Hall KC, the government’s independent reviewer of terrorism legislation, such chatbots represent the “next stage” from people finding like-minded extremists online.

He warns that dealing with terrorism content generated by AI will be “impossible” under the government’s flagship internet safety legislation, the Online Safety Bill.

The legislation would place the onus on companies to remove terrorist content, but their existing processes generally rely on databases of known material, which would not catch new discourse generated by an AI chatbot.

“I believe we are already sleepwalking into a situation similar to the early days of social media, where you believe you are dealing with something regulated but it is not,” he added.

“Before we start downloading, giving it to our kids, and incorporating it into our lives, we need to know what the safeguards are in practice – not just terms and conditions, but who is enforcing them and how.”

Scams involving impersonation and kidnapping

Jennifer DeStefano reportedly heard her tearful 15-year-old daughter Briana plead, “Mom, these bad men have me, help me,” before a male kidnapper demanded a $1 million (£787,000) ransom, which was later reduced to $50,000 (£40,000).

Her daughter was in fact safe and sound, and the Arizona mother recently told the Senate Judiciary Committee that authorities suspect AI was used to imitate her daughter’s voice as part of a scam.

An online demonstration of an AI chatbot designed to “call anybody with any aim” produced similar results, with the target being told: “I have your child… I’m demanding a $1 million ransom for his safe return. Am I making myself clear?”

“It’s pretty extraordinary,” said Professor Lewis Gryphon, one of the authors of a 2020 research paper, published by UCL’s Dawes Centre for Future Crime, that ranked potential illegal uses of AI.

“Our top-ranked crime has proven to be the case – audio/visual impersonation – that’s clearly coming to pass,” he said, adding that it has happened “a lot faster than we expected” despite the scientists’ “pessimistic views.”

Although the demonstration used a synthesised voice, he said that real-time audio/visual impersonation is “not there yet, but we are not far off,” and he predicted that such technology would be “fairly out of the box in a couple of years.”

“Whether it will be good enough to impersonate a family member, I don’t know,” he remarked.

“If it’s compelling and highly emotional, it could be someone saying, ‘I’m in danger,’ and that would be pretty effective.”

In 2019, the chief executive of a UK-based energy firm reportedly transferred €220,000 (£173,310) to fraudsters who used AI to imitate his boss’s voice.

According to Professor Gryphon, such scams could be even more effective if backed up by video, or the technology could be used for espionage, with a spoof company employee appearing on a Zoom call to obtain information without having to say anything.

The professor said cold-calling scams could also grow in scale, with bots using a local accent proving more effective at conning people than the fraudsters who currently run such operations out of India and Pakistan.

Deepfakes and blackmail plots

“The synthetic child abuse is horrifying, and they can do it right now,” Professor Gryphon said of AI technology that paedophiles are already using to create images of child sexual abuse online. “These people are so driven that they’ve just gotten started. That’s quite upsetting.”

In the future, deepfake images or videos, which appear to show someone doing something they have not done, could be used to carry out blackmail plots.

“It’s already pretty good at putting a new face on a porn film. It will get better,” Professor Gryphon said.

“Imagine someone sending a parent a video of their child being exposed and saying, ‘I have the video, I’m going to show it to you,’ and threatening to release it.”

Terrorist acts

While drones or self-driving cars could be used to carry out attacks, the use of truly autonomous weapons systems by terrorists is likely to be a long way off, according to the government’s independent reviewer of terrorism legislation.

“The true AI aspect is where you just send up a drone and say, ‘go and cause mischief,’ and AI decides to go and divebomb someone,” Mr Hall explained.

“That sort of thing is definitely on the horizon, but it’s already here on the language side.”

While ChatGPT, a large language model trained on massive volumes of text data, will not provide instructions on how to make a nail bomb, other similar models may lack the same guardrails and could suggest carrying out malicious acts.

Shadow home secretary Yvette Cooper has said Labour would bring in a new law to criminalise the deliberate training of chatbots to radicalise vulnerable people.

Although current legislation would cover cases in which someone was found with information useful for acts of terrorism that had been put into an AI system, Mr Hall said that new laws on encouraging terrorism could be “something to think about.”

Current laws are about “encouraging other people,” and “training a chatbot would not be encouraging a human,” he said, adding that it would be difficult to criminalise the possession of a particular chatbot or its developers.

He also explained how AI could hamper investigations, with terrorists no longer needing to download material and instead simply being able to ask a chatbot how to make a bomb.

“Possession of known terrorist information is one of the main counter-terrorism tactics for dealing with terrorists, but now you can just ask an unregulated ChatGPT model to find that for you,” he explained.

Art forgery and high-value robberies?

According to Professor Gryphon, the arrival of ChatGPT-style large language models that can use tools allowing them to visit websites and act like an intelligent person, by creating accounts, filling in forms, and buying things, will soon make “a whole new bunch of crimes” possible.

“Once you have a system to do that and you can just say ‘here’s what I want you to do,’ then there’s all kinds of fraudulent things that can be done like that,” he said, suggesting that they could apply for fraudulent loans, manipulate prices by posing as small-time investors, or carry out denial-of-service attacks.

He also said they could hack systems on request, adding: “If you could get access to a lot of people’s webcams or doorbell cameras, you might be able to have them survey thousands of them and tell you when they are out.”

However, while AI may have the technical ability to produce a painting in the style of Vermeer or Rembrandt, there are already master human forgers, and the academic believes the hardest part will remain convincing the art world that the work is genuine.

“I don’t think it’s going to change traditional crime,” he said, arguing that AI would be of little use in eye-catching Hatton Garden-style heists.

“Their skills are like plumbers, they are the last people to be replaced by robots – don’t be a computer programmer, be a safe cracker,” he joked.

What does the government have to say?

“While innovative technologies like artificial intelligence have many benefits, we must exercise caution,” a government spokeswoman stated.

“Services will be required under the Online Safety Bill to prevent the spread of illegal content such as child sexual abuse, terrorist material, and fraud. The bill is deliberately tech-neutral and future-proofed so that it keeps pace with emerging technologies such as artificial intelligence.

“Rapid work is also underway across government to deepen our understanding of risks and develop solutions – the creation of the AI taskforce and the first global AI Safety Summit this autumn are both significant contributions to this effort.”
